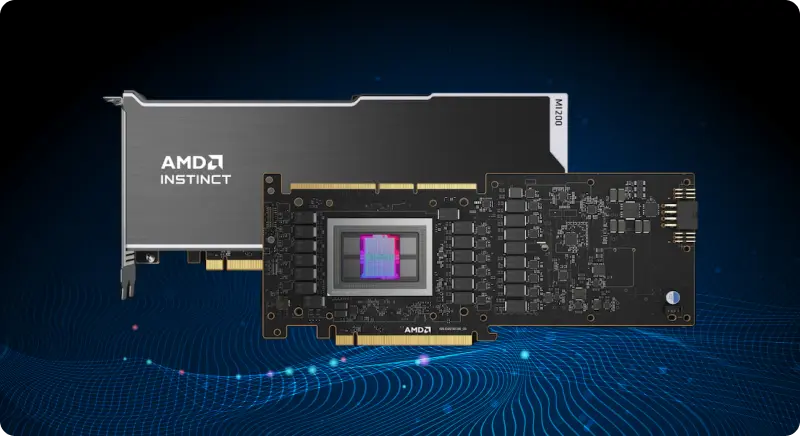

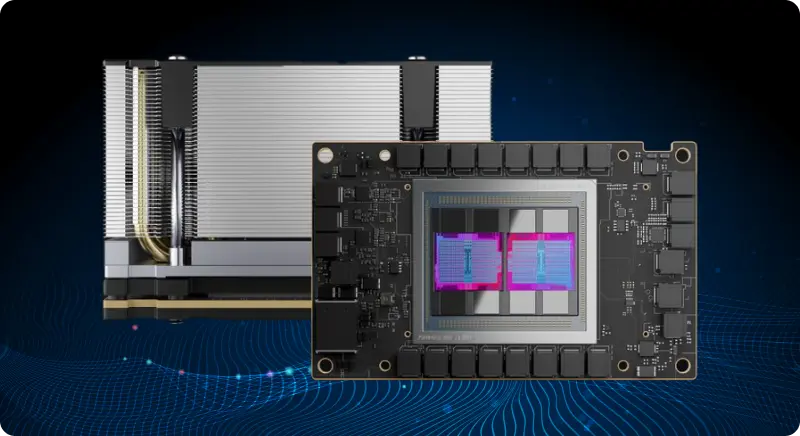

Deploy HPE enterprise-grade bare metal servers powered by AMD Instinct accelerators for machine learning, LLM inference, and high-performance computing workloads.

AMD Instinct GPU servers are engineered for artificial intelligence, machine learning, and large language model deployment. They combine the CDNA 3 compute architecture with Zen 4 CPU cores and 192GB of HBM3 unified memory for intensive AI training, inference, and HPC applications.

Enterprise-grade accelerators built on CDNA 2 architecture for exascale computing and AI workloads

| | MI210 | L40S | A100 | H100 |
|---|---|---|---|---|
| GPU Architecture | CDNA 2.0 | Ada Lovelace | NVIDIA Ampere | Hopper |
| GPU Memory | 64GB HBM2e | 48GB GDDR6 | 80GB HBM2e | 80GB HBM3 |
| GPU Memory Bandwidth | 1638 GB/s | 864 GB/s | 1935 GB/s | 3352 GB/s |
| FP32 | 22.63 TFLOPS | 91.6 TFLOPS | 19.5 TFLOPS | 51 TFLOPS |
| TF32 Tensor Core | 312 TFLOPS | 366 TFLOPS | 312 TFLOPS | 756 TFLOPS |
| FP16/BF16 Tensor Core | 181 TFLOPS | 733 TFLOPS | 624 TFLOPS | 1513 TFLOPS |
| Power | Up to 300W | Up to 350W | Up to 400W | Up to 350W |
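The figures in the comparison table above can be turned into two rough sizing checks. The sketch below is illustrative only: the helper names (`flops_per_byte`, `fits_in_memory`, `ridge_points`) are hypothetical, the numbers are copied from the table as peak values, and the memory check assumes 2 bytes per parameter (FP16/BF16 weights) while ignoring KV cache, activations, and framework overhead. Vendor peak figures may be quoted under different conditions (for example, with or without structured sparsity), so treat the ratios as a starting point rather than a benchmark.

```python
# Peak spec values copied from the comparison table (vendor-quoted, not measured).
SPECS = {
    "MI210": {"mem_gb": 64, "bw_gbs": 1638, "fp16_tflops": 181},
    "L40S":  {"mem_gb": 48, "bw_gbs": 864,  "fp16_tflops": 733},
    "A100":  {"mem_gb": 80, "bw_gbs": 1935, "fp16_tflops": 624},
    "H100":  {"mem_gb": 80, "bw_gbs": 3352, "fp16_tflops": 1513},
}


def flops_per_byte(spec):
    """Peak FP16 FLOPs available per byte of memory traffic.

    Kernels whose arithmetic intensity is below this ratio are limited by
    memory bandwidth rather than compute (the roofline "ridge point").
    """
    return (spec["fp16_tflops"] * 1e12) / (spec["bw_gbs"] * 1e9)


def fits_in_memory(params_billion, spec, bytes_per_param=2):
    """Return True if the model weights alone fit in GPU memory.

    Assumption: decimal gigabytes, weights only (no KV cache or activations).
    """
    weights_gb = params_billion * bytes_per_param
    return weights_gb <= spec["mem_gb"]


def ridge_points():
    """Ridge point (FLOPs per byte) for every GPU in SPECS, rounded to 0.1."""
    return {name: round(flops_per_byte(s), 1) for name, s in SPECS.items()}


if __name__ == "__main__":
    for name, ratio in ridge_points().items():
        print(f"{name}: {ratio} FLOPs/byte")
    # Example: does a hypothetical 30B-parameter FP16 model fit on one card?
    for name, spec in SPECS.items():
        print(f"{name}: 30B weights fit = {fits_in_memory(30, spec)}")
```

A lower FLOPs-per-byte ratio means the card has more memory bandwidth relative to its peak compute, which tends to matter for bandwidth-bound work such as single-stream LLM token generation.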

Get answers to common questions about deploying and operating AMD Instinct GPU-accelerated bare metal servers for AI training, inference, and high-performance computing applications.